Builder robot 1.0

12.18.2017

I am working towards a future where an architect could design a building and send the 3D model to a group of mobile robots that self-organize to build directly from that model. As the robots start construction, the architect could observe the process, realize that she wants to make changes to the design, update the model, and resend the changes to the robots. They would immediately switch to the new model and make any updates necessary. By developing teams of robots that can collaboratively work together to build large structures, we could also aid in disaster relief, enable construction in remote locations, and support the health of construction workers in hazardous environments. In addition, this technology would greatly contribute to the architecture, engineering, and construction (AEC) industry in speed and customization.

If we are to push the boundaries of robotic fabrication, then we need to create collaborative robots that can work together to plan and build large structures. The robots could be a diverse group with many different functions and skills between them. Some could lay rebar, while others could pour and print concrete. Others may need to work together to detail hard to reach places. Rather than making one or two huge expensive robots, we could utilize a dozen smaller robots that could combine their abilities to achieve much more than one could alone. In essence, they would work together to build the physical manifestation of a digital model, responding to changes in the environment, updates to the model, or surprises in the material.

current research

I am currently taking a first step towards this future by developing a small group of robots that learn how to work together to solve a puzzle. I am building and programming robotic agents, in both the simulated and real world, that can assemble a small puzzle. The ambition is to have a group of robots dynamically work together and collaborate to achieve something that they could not do alone. In this stage, I focus on the interaction between the robots over the robots themselves. Rather than taking an explicit planning approach, I explore an area of research in artificial intelligence called reinforcement learning (RL). Drawn from behavioral psychology, reinforcement learning is a machine learning approach where agents learn an optimal behavior to achieve a specific goal by receiving rewards or penalties for good and bad behavior, respectively. By altering the reward structure, I can evoke different behaviors between robots - for example adversarial versus collaborative. This enables me to find emergent behavioral patterns that are ideal for collaborative assembly processes.

This form of collaboration would lead to a more flexible system, where robots would not be limited to their individual abilities, but those pooled as a group. This research addresses the limitations of individual robots in large-scale construction including the inflexibility of robotic systems in dynamic environments. The anticipated contributions of this research include: building a team of multiple collaborative construction robots, evaluating existing robotic interaction, implementing a novel collaborative behavior between robots (in both simulation and real world), and evaluating reinforcement learning algorithms for methods of collaborative assemblies with multi-robot teams.

phase 1.1: build robotic agent

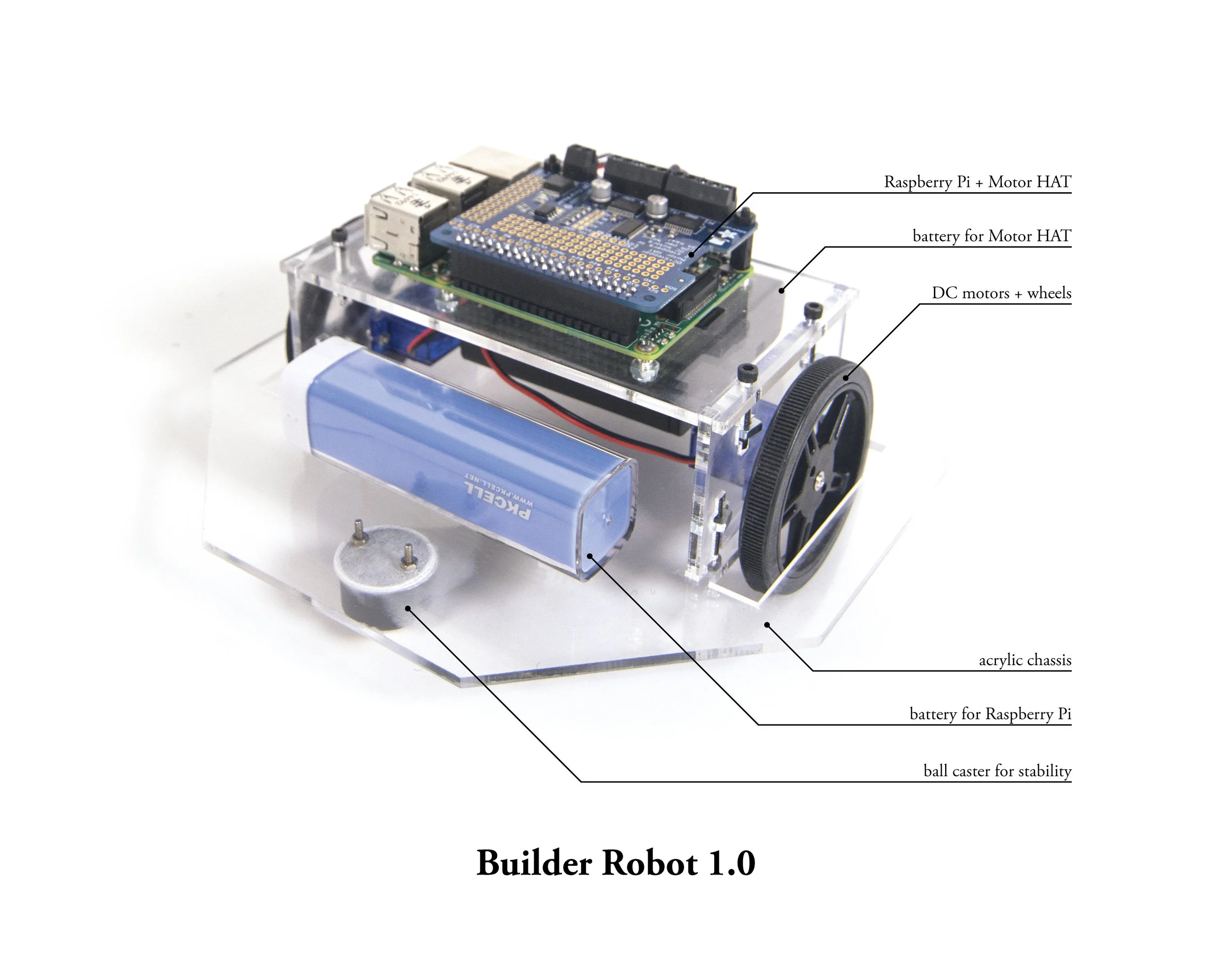

In the first phase of this research, I am developing a single robotic agent, in both the simulated and real world, that can assemble a small puzzle. Here, I present the first iteration of the robot, Builder Robot 1.0, and the lightweight blocks it will assemble. The aim is to make the simplest robot that is capable of learning and exhibiting collaborative behavior. This first robot is 6” in diameter, and it is controlled by a Raspberry Pi 3, a tiny computer the size of a credit card, enabled with bluetooth and wifi. In this first phase, the robot does not have any armatures and interacts with its environment solely through pushing around blocks with its body. The blocks are made from a lightweight extruded polystyrene foam for ease of movement. An overhead camera provides the robot with global positioning information.

The robot shown above is manually controlled remotely through a custom iPhone interface. The video demonstrates the simple control and abilities of Builder Robot 1.0.

Phase 1.2: Single Simulated Agent

In addition to the physical robots, I am developing a simulation for training the robotic agents. I have defined each state to be composed of information about each block. It contains each block’s current location (x and y coordinates), rotation angle, and a boolean telling whether it is in its final location. When all blocks are in their final position (ie. every boolean is True), the episode is complete. I will use an overhead camera along with shape recognition and centroid calculation to determine the block information. The final location is determined from the input model. At the beginning of each episode, the blocks are randomly placed around the work area. At each state, the robotic agents each take two actions, velocity and turning angle. At each time step, the agents receive a living penalty (e.g., -0.5). For each block placed, the agents receive a small reward (e.g., 10), which in turn is removed if the block moves out of place. When all blocks are in their final location, the episode is complete and the agents receive a significant reward (e.g., 400).

Concept diagram of Builder Robots working together to assemble a puzzle. Each state (s) is composed of information about the blocks. The robotic agents take actions (v: velocity and w: turning angle) at each state in order to achieve the common end goal of completing the puzzle.

For my first simulation, I designed the environment in Blender, an open source 3D modeling environment. This simulation, show below, is controlled through the Modular OpenRobots Simulation Engine (MORSE) and is manually controlled to test the environment. While setting up the environment for RL, I ran into some key issues in resetting the environment for training. Because of this keystone issue, I am looking into different simulation and physics engines that have more documentation and support around ML and RL, such as MuJoCo and Gazebo.

Next steps

Once I determine a more appropriate simulation engine, I will begin testing RL approaches including basic policy search, policy gradient, and guided policy search. Following a proof of concept with a single robotic agent in simulation, I will transfer the optimal policy to the physical robot and do further training in the real world.

In the second phase, I will explore the interaction between multiple robots. In one scenario, robots would have the same base body but different abilities. One robot may have more finely tuned sensors while the other may have more advanced manipulation tools. Through interaction, the robots would learn about each other’s strengths and negotiate roles to complete the task. I will test alternative reward structures to study how this may affect behavior and interaction between robotic agents.